The Platform Nobody Counts: How Duda Slips Past Every Website Speed Study

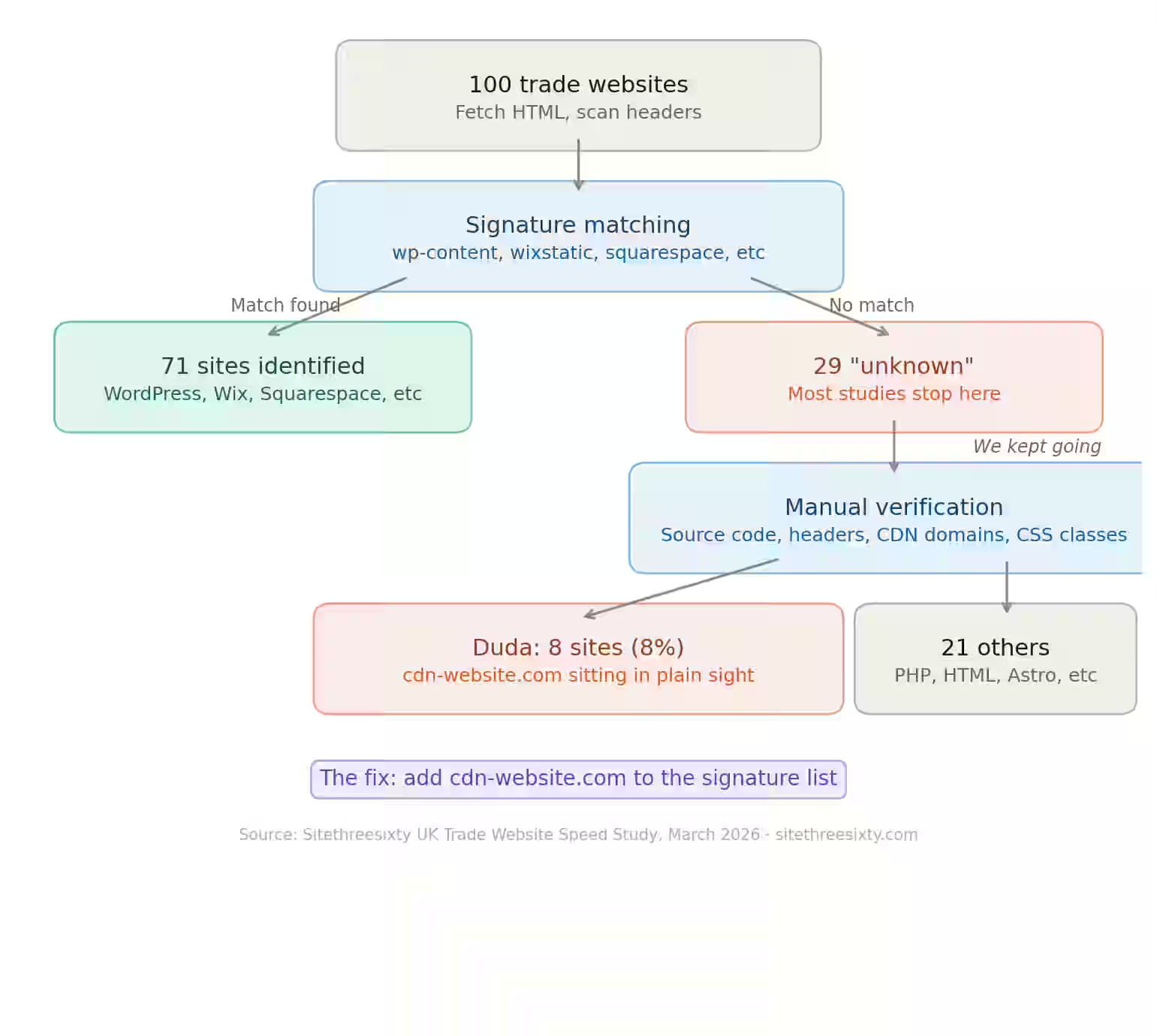

We ran a speed test on 100 trade websites across the UK: locksmiths, painters, plumbers, the usual suspects. We grabbed the top three organic Google results from 11 major cities. Nothing fancy, just honest work with the Google PageSpeed Insights API.

To see what platforms these sites were built on, we used automated detection: HTTP headers, HTML source checks, generator meta tags. WordPress, Wix, Squarespace—they’re easy to spot. Everyone does these checks.

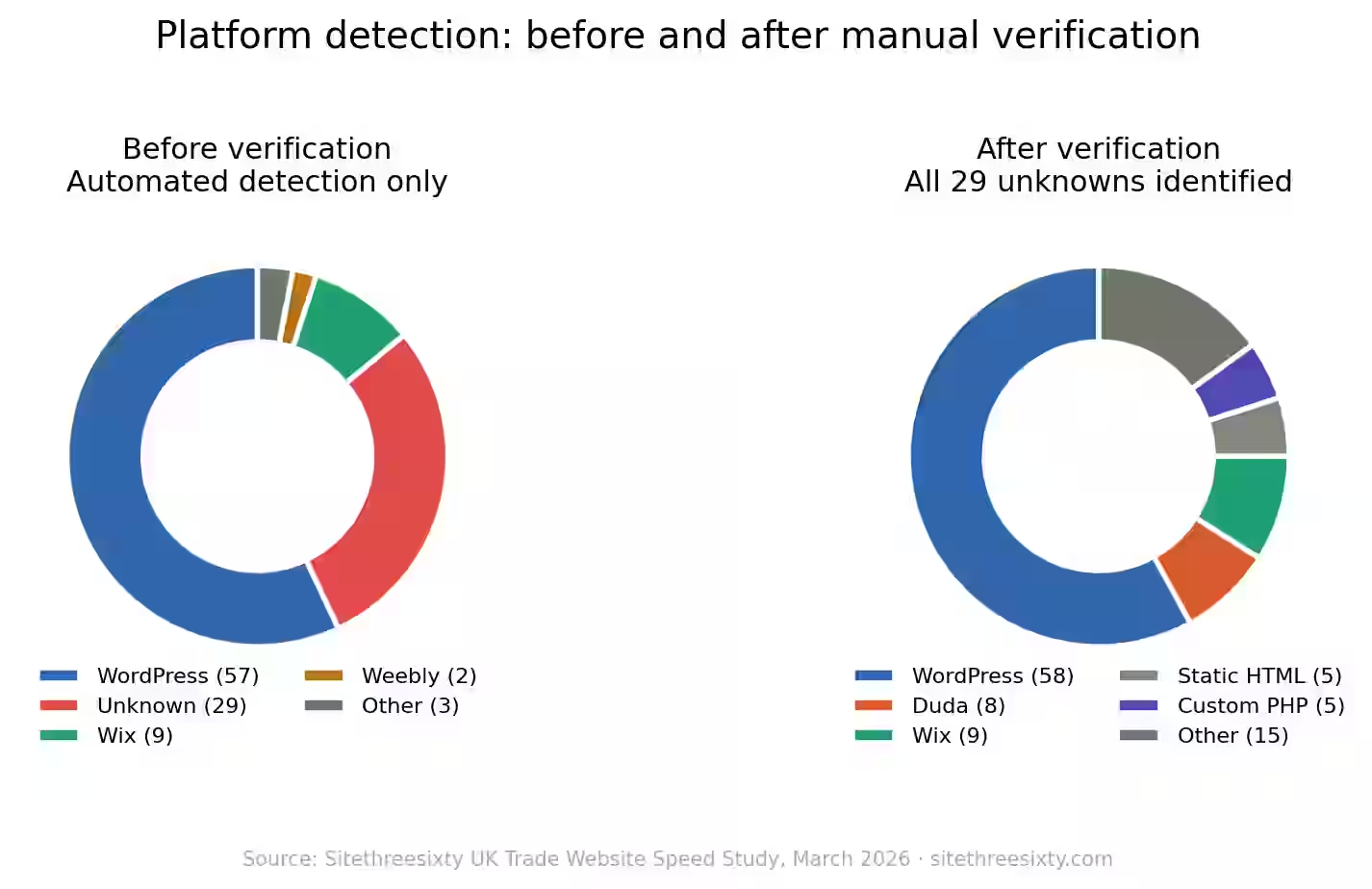

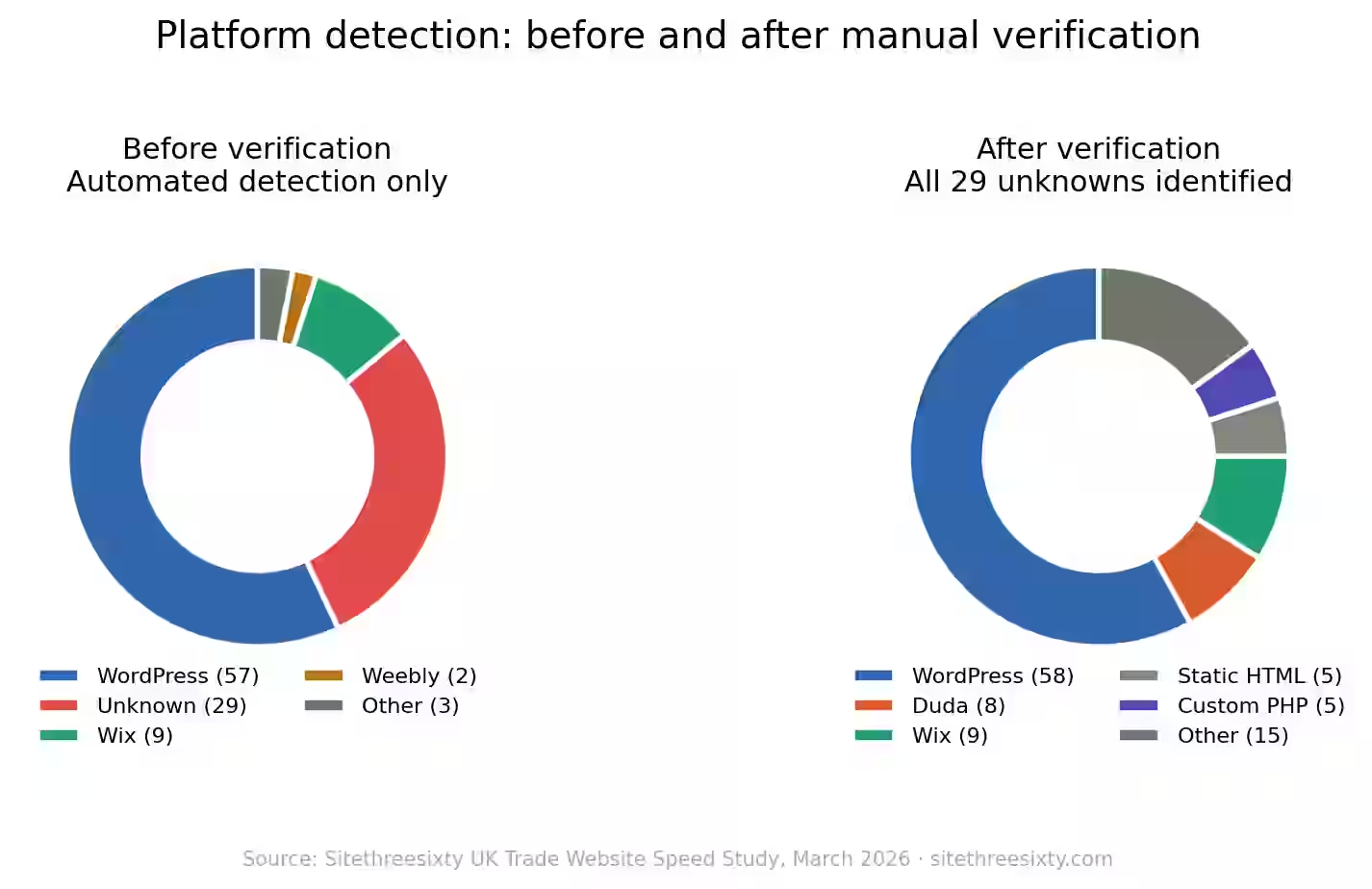

But then, 29 out of 100 sites came back as “custom / unknown.” That’s nearly a third. And honestly, we didn’t buy it.

Why 29% “Unknown” Feels Wrong

When almost a third of your data is “unknown,” you’re not really finding anything new. You’re just admitting your detection method has holes. Most studies would stick with “custom / unknown” as a category, pop it in a pie chart, and move on. WordPress looks dominant, Wix’s share seems legit, and nobody really questions the leftovers because nobody expects absolute accuracy when identifying obscure platforms.

We weren’t happy with that. So we checked all 29 “unknown” sites by hand. We dug through the source code, headers, URL structures, and hit up BuiltWith and Wappalyzer. If a site still wasn’t clear after all that, we made a judgement call.

What We Actually Found

Here’s how those 29 “unknown” sites broke down:

- Duda: 8

- Static HTML: 5

- Custom PHP: 5

- WordPress (hidden): 1

- And a random handful of others: Webflow, Next.js, Astro, Carrd, it’seeze, BUILT, Google Sites, one.com, GoHighLevel, plus one truly custom-built site

Duda alone showed up on eight sites, more than Squarespace, Shopify, Joomla, and Weebly combined. After manual correction, Duda matched Wix at about 8–9% of these UK trade sites. You’d never spot that with just automated detection.

How We Picked Out the Duda Sites

Let’s break down our process.

First, the automated script. It pulled raw HTML using Chrome’s user-agent, then looked for platforms by matching against hardcoded signatures like `/wp-content/`, `wixstatic.com`, `squarespace.com`, and `shopify.com`, names everyone recognises.

But Duda wasn’t on the list, nor were it’seeze, BUILT, Carrd, GoHighLevel, and a few others we later found. So if the script didn’t see a familiar pattern, it dumped the site into “custom / unknown.”

No online detection tools at this point. No JavaScript rendering. Just string matching.
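To make the string-matching step concrete, here is a rough Python sketch of that kind of detector. It is not the exact script we ran: the four signature strings are the ones named above, while the table layout and the names `SIGNATURES` and `detect_platform` are illustrative assumptions.

```python
# Signature substrings taken from the article; the table structure and
# function name are hypothetical, chosen only for illustration.
SIGNATURES = {
    "WordPress": ["/wp-content/"],
    "Wix": ["wixstatic.com"],
    "Squarespace": ["squarespace.com"],
    "Shopify": ["shopify.com"],
}

UNKNOWN = "custom / unknown"


def detect_platform(html: str) -> str:
    """Return the first platform whose signature appears in the raw HTML."""
    lowered = html.lower()
    for platform, needles in SIGNATURES.items():
        if any(needle in lowered for needle in needles):
            return platform
    # Nothing matched, so the site falls into the catch-all bin.
    return UNKNOWN
```

Anything missing from the table, Duda included, can only ever come back as “custom / unknown,” no matter how obvious its markup is to a human reader.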

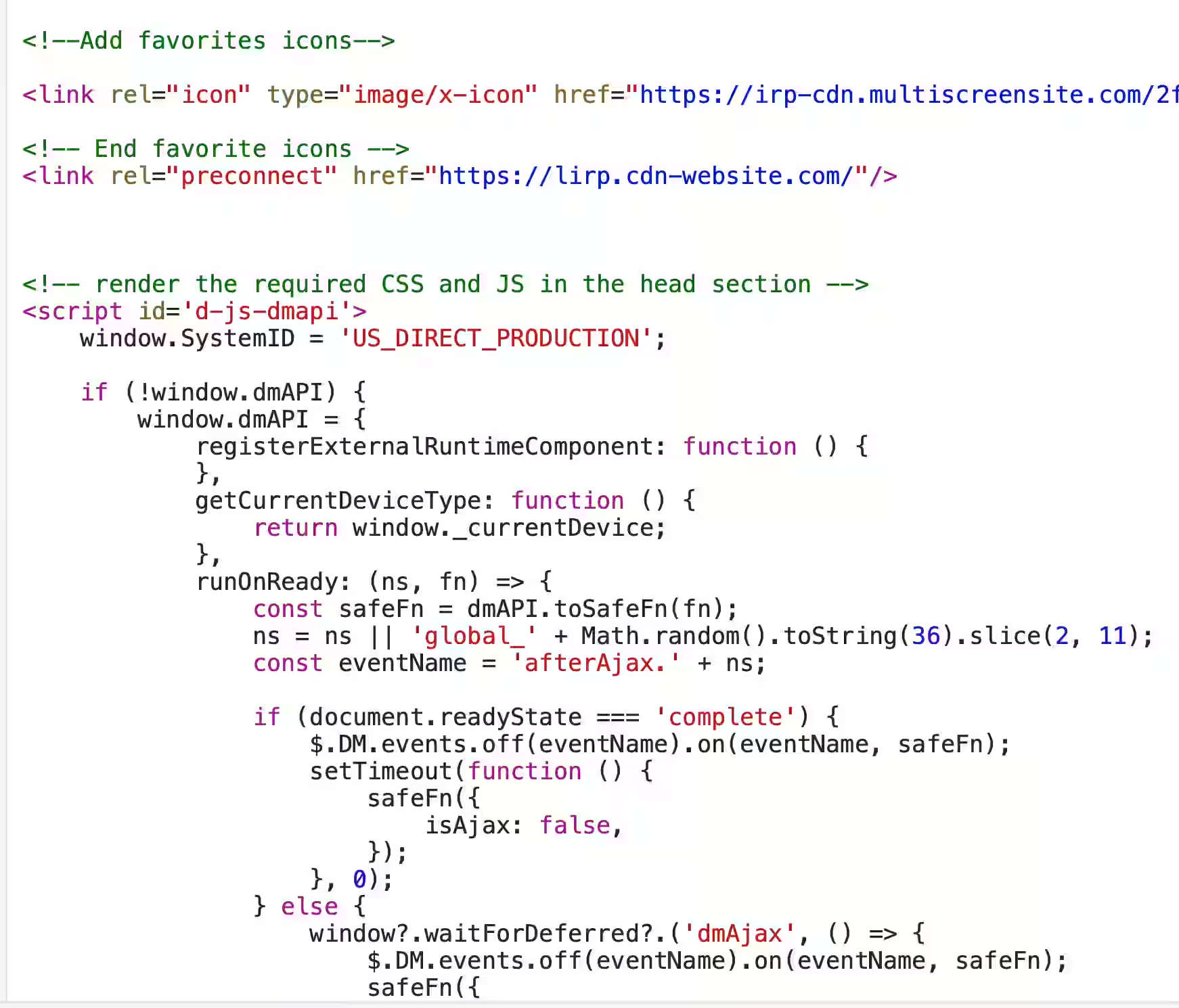

Next, manual checks. For those 29 mystery sites, we fetched HTML and looked for quieter clues: CDN domains, weird CSS class names, meta tags, file extensions, script references, and footer credits.

We tried BuiltWith, Wappalyzer, and WhatCMS too, but they block automated scraping with CAPTCHAs. They work fine if you visit them manually in a browser and type in a URL—but for bulk automated verification, you’re out of luck. For 29 sites you could do it by hand in half an hour, but our verification process stuck to direct source code inspection instead.

Now, here’s the kicker: seven out of eight Duda sites blocked our usual tool with a 403. The site’s WAF shut us out. We got past it by using `curl` with a browser user-agent, then grabbed the HTML and spotted Duda’s unique `dm*` CSS classes.

Two sites blocked everything. We just opened them in the browser and checked the source.
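The same two moves, fetching with a browser-like user-agent and scanning for `dm`-prefixed classes, can be sketched in Python. Treat this as an illustration under assumptions rather than our tooling: the user-agent string, the function names, and the `min_hits` threshold are all made up for the example, and a bare `dm` prefix check can produce false positives on non-Duda sites.

```python
import re
import urllib.request

# A browser-like User-Agent; the exact string is an illustrative assumption.
BROWSER_UA = (
    "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 "
    "(KHTML, like Gecko) Chrome/122.0.0.0 Safari/537.36"
)

# Matches class="..." or class='...' and captures the attribute value.
CLASS_ATTR = re.compile(r'class\s*=\s*["\']([^"\']*)["\']', re.IGNORECASE)


def fetch_html(url: str, timeout: float = 15.0) -> str:
    """Fetch a page while sending a browser-like User-Agent header."""
    request = urllib.request.Request(url, headers={"User-Agent": BROWSER_UA})
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return response.read().decode("utf-8", errors="replace")


def dm_class_names(html: str) -> set[str]:
    """Collect distinct class tokens that start with the 'dm' prefix."""
    found = set()
    for match in CLASS_ATTR.finditer(html):
        for token in match.group(1).split():
            if len(token) > 2 and token.startswith("dm"):
                found.add(token)
    return found


def looks_like_duda(html: str, min_hits: int = 3) -> bool:
    """Heuristic: several distinct dm* classes hint at Duda (threshold is assumed)."""
    return len(dm_class_names(html)) >= min_hits
```

A site that still answers with a 403 will make `fetch_html` raise `urllib.error.HTTPError`, which is the cue to fall back to opening the page in a real browser.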

Two Ways Duda Hides

Duda doesn’t just slip through the cracks—it’s practically invisible in two ways.

First, nobody’s looking for it. Detection scripts only check for big names. Duda isn’t on most lists, so it’s ignored by default.

Second, even if you go searching, automation can’t break through. Duda’s WAF blocks most automated probes. Sites return a 403. Tools like BuiltWith, Wappalyzer, and WhatCMS serve CAPTCHAs to automated queries—they’re built for humans in a browser, not for scripts running at scale. A person sitting at a desk could identify Duda in minutes using these tools. But any study running hundreds or thousands of sites isn’t doing it manually. They’re automating it. And the automation gets blocked.

Most studies never get past the first layer. Whatever doesn’t match goes in “other.” The few who dig deeper run into layer two, throw up their hands, and walk away.

The result: Duda barely gets counted anywhere.

Why It Matters

Think about every platform share report. Duda’s real slice is invisible. If your detection tool doesn’t look for Duda, or its WAF blocks you, you lump the site into “other.” WordPress’s share ends up higher. “Custom” looks inflated. Duda’s actual presence—especially among agency-built sites—is wiped out.

This isn’t just our study. Any automated platform verification runs into the same wall. If you don’t list the platform and your fallback tools get blocked, you miss it.

Who Else Is Ghosting?

Duda isn’t the only one. We ran into similar problems elsewhere.

One WordPress site stripped out every tell: no `/wp-content/`, no generator meta tag, nothing obvious. It ran Slider Revolution, a WordPress-only plugin, which gave it away, but without a thorough check, we’d have missed it. Plugins like Hide My WP, Perfmatters, and Swift Performance can mask WordPress in source output entirely.

CAPTCHA blocks aren’t unique to Duda either. BuiltWith, Wappalyzer, and WhatCMS blocked automated access for every site in the study, not just Duda ones. These tools are designed for humans in browsers, and they work perfectly that way. But the classic “just use BuiltWith as backup” assumption in most study methodologies doesn’t hold up once you try to automate it at any kind of scale.

Automated detection always misses things. The question is, how much is slipping through—and does anyone publishing platform stats actually account for these big blind spots?

Why This Screws Up Speed Comparisons

When you see articles comparing “WordPress vs Wix vs custom speed” where platforms are detected automatically, the data probably isn’t right.

Not because the speed scores are off, but because the platform info is. Sites that should be marked as Duda end up “custom.” Some WordPress sites wind up “unknown.” So when you average speed scores for each platform group, you’re really comparing apples to oranges—nobody’s got the right roster.

In our original detection, WordPress sites had an average mobile score of 64—so did “custom/unknown.” Seemed meaningful: “WordPress equals custom performance.”

But after manual checks, that “custom/unknown” bin scattered across 16 platforms. The whole comparison fell apart. That bin wasn’t a real group—it was just everything the detector couldn’t figure out.
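Here is a toy Python sketch of why a catch-all bin can fake a meaningful average. The scores are invented purely for illustration and are not our study data; the function names and the shape of the `manual_fixes` mapping are assumptions too.

```python
from collections import defaultdict
from statistics import mean


def mean_score_by_platform(rows):
    """rows: iterable of (platform, mobile_score) pairs -> {platform: mean score}."""
    groups = defaultdict(list)
    for platform, score in rows:
        groups[platform].append(score)
    return {platform: mean(scores) for platform, scores in groups.items()}


def relabel(rows, corrections):
    """Apply manual platform corrections keyed by row index; other rows are kept."""
    return [
        (corrections.get(index, platform), score)
        for index, (platform, score) in enumerate(rows)
    ]


# Made-up scores for illustration only (not the study's data).
automated = [
    ("WordPress", 60),
    ("WordPress", 68),
    ("custom / unknown", 40),
    ("custom / unknown", 88),
]
# Hypothetical manual corrections: row index -> verified platform.
manual_fixes = {2: "Duda", 3: "Static HTML"}

before = mean_score_by_platform(automated)
after = mean_score_by_platform(relabel(automated, manual_fixes))
```

In this toy data the “custom / unknown” bin averages the same as WordPress before correction, yet once its two sites are relabelled they sit at opposite ends of the range. The matching average said nothing about any real platform.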

The Takeaway

If you want real platform performance data, check your assignments. Automation is just the starting point.

For smaller studies (fewer than 500 sites), manually confirm your “unknowns.” It’s doable and makes the data way better. For bigger studies, at the very least, tell people how big your “unknown” bin is and be honest about the detection limits.

Reporting “30% custom/unknown” as a platform is just misleading. It’s not a fact about the web—it’s a fact about how your detection tool works.
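Reporting the size of the bin takes one line of arithmetic. A minimal Python sketch, assuming your detector emits one platform label per site and uses a single catch-all label (the name `unknown_share` and the label value are illustrative):

```python
from collections import Counter

UNKNOWN = "custom / unknown"


def unknown_share(labels, unknown_label=UNKNOWN):
    """Return the fraction of sites whose platform the detector could not name."""
    if not labels:
        raise ValueError("labels must not be empty")
    counts = Counter(labels)
    return counts[unknown_label] / len(labels)
```

With our first-pass numbers, 29 unidentified sites out of 100, this comes out at 0.29. That figure belongs in the methodology section as a detection limit, not in the pie chart as a platform.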

Our Methodology

We looked at 100 UK trade sites for locksmiths, painters, and plumbers from 11 big UK cities, taking the top 3 Google organic results each time.

Automated platform detection first (HTTP headers, HTML source signature matching), then manual verification for all 29 unidentified sites using source code inspection, URL structure analysis, and `curl` with browser headers for sites that blocked standard fetch requests. BuiltWith and Wappalyzer were attempted as automated cross-references but blocked access via CAPTCHAs; these tools work fine manually in a browser but can’t be called programmatically at scale.

Full results (speed scores, Core Web Vitals, and tech checks) are published in our companion article: “We Tested 100 UK Trade Websites—94% Fail Google’s Own Speed Standards.”

All data is anonymised. No business names or URLs published. We keep raw data internally.

About

This research is from Sitethreesixty. We build and manage sites for trades and small service businesses in the UK and US.

We’re publishing this because, frankly, we want the web industry to be more honest about its data. If your methodology has a blind spot, admit it. If your “other” category is just a mix of everything you couldn’t identify, don’t pretend it’s some valuable finding.

If you want to talk about our methodology, verify our results, or you’ve hit the same Duda detection issues—reach out.

Data collected March 2026. Platform detection checked manually for every site that automation couldn’t identify.